A quick elementary post which is my entry to this months’ T-SQL Tuesday entry hosted by a good friend SQLDoubleG http://www.sqldoubleg.com/2017/07/03/tsql2sday-92-lessons-learned-the-hard-way/.

We are here to talk about mistakes we used to make. There is one mistake that I am going to discuss and is something that I used to do 10 years ago, obviously I do not do this anymore.

I USED TO SHRINK DATABASES

There, I said it! Why did I do this? Well I never knew any better. Why shouldn’t you do this?

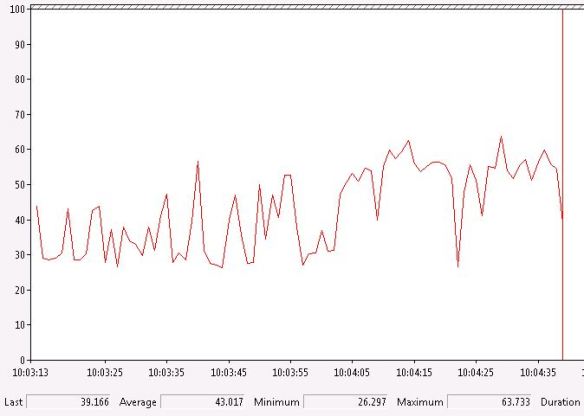

It consumes CPU.

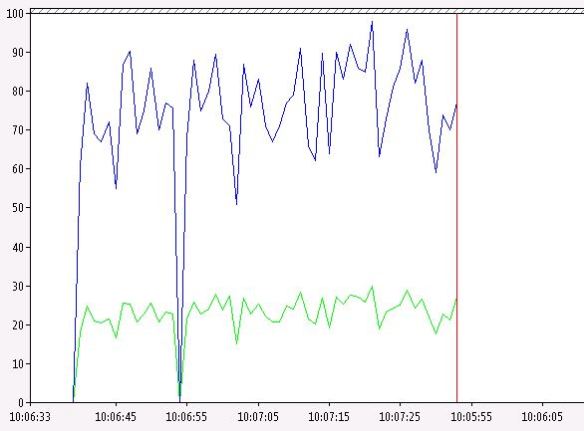

It is I/O hungry – Top blue line is physical reads and the other one is physical writes.

Fragmentation – a lot of it.

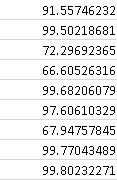

This is a list of the external fragmentation percentages after a shrink operation for a specific table with many indexes on it.

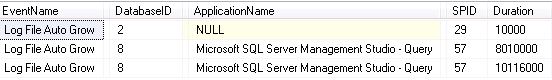

It works the transaction log hard. Below is a query I tend to use to query the default trace looking for log file growth.

SELECT TE.name AS [EventName] ,

T.DatabaseName ,

t.DatabaseID ,

t.NTDomainName ,

t.ApplicationName ,

t.LoginName ,

t.SPID ,

t.Duration ,

t.StartTime ,

t.EndTime

FROM sys.fn_trace_gettable(CONVERT(VARCHAR(150), ( SELECT TOP 1

f.[value]

FROM sys.fn_trace_getinfo(NULL) f

WHERE f.property = 2

)), DEFAULT) T

JOIN sys.trace_events TE ON T.EventClass = TE.trace_event_id

WHERE te.name = 'Log File Auto Grow'

OR te.name = 'Log File Auto Shrink'

ORDER BY t.StartTime ;

There is no real need for this operation as a day to day task. I know it is very easy to do, a couple of clicks via SQL Server Management Studio (SSMS). If you have some space within your database just leave it. You will probably need it in the future.

Pingback: T-SQL Tuesday #92, Lessons learned the hard way. Wrapping up – SQLDoubleG