Another re-post of a video from last year, this time showing a built-in safeguard when setting max server memory for your SQL Server. If you enter a silly figure such as 50 MB, SSMS (SQL Server Management Studio) changes it to 128 MB, which is the minimum allowable value for max server memory.
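If you prefer a query to the SSMS dialog, the allowed range can also be read from the catalog. This is a minimal sketch, assuming you can connect to the instance and read `sys.configurations`; it only reads the setting and changes nothing.

```sql
-- Show the allowed range and the current value for max server memory.
-- The minimum column is the lowest value the instance will accept.
SELECT name,
       minimum,
       maximum,
       value,         -- configured value
       value_in_use   -- value currently in effect
FROM sys.configurations
WHERE name = N'max server memory (MB)';
```

I would expect `minimum` to line up with the 128 MB that SSMS falls back to, but check it on your own version rather than taking that as given.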


Azure SQL Database – Optimizing For Ad-hoc Workloads

Today I found out that it is now possible to enable the optimize for ad hoc workloads setting at the database level when using Azure SQL Database. Traditionally this was always set at the server (instance) level for on-premises SQL Servers.
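As a rough sketch of the difference in T-SQL: the first two statements are the database-scoped form, run while connected to the Azure SQL Database in question. The last batch is the traditional instance-level form, shown for comparison only and meant to be run separately on a regular SQL Server instance, not on Azure SQL Database.

```sql
-- Azure SQL Database: enable the option for the current database.
ALTER DATABASE SCOPED CONFIGURATION SET OPTIMIZE_FOR_AD_HOC_WORKLOADS = ON;

-- Confirm the database-scoped setting.
SELECT name, value
FROM sys.database_scoped_configurations
WHERE name = N'OPTIMIZE_FOR_AD_HOC_WORKLOADS';

-- Traditional instance-level form (run on a regular SQL Server instance).
-- This is an advanced option, so advanced options must be visible first.
EXEC sys.sp_configure N'show advanced options', 1;
RECONFIGURE;
EXEC sys.sp_configure N'optimize for ad hoc workloads', 1;
RECONFIGURE;
```

Note the naming difference: the database-scoped option uses underscores, while the `sp_configure` option name is written with spaces and no hyphen.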

Task Manager is a liar

I remember asking a question at a recent training event. The outcome? I no longer use Task Manager to try to understand how much memory SQL Server is consuming. Why? Well, it has the ability to lie and, as you will see, it can lie really well (depending on your settings).

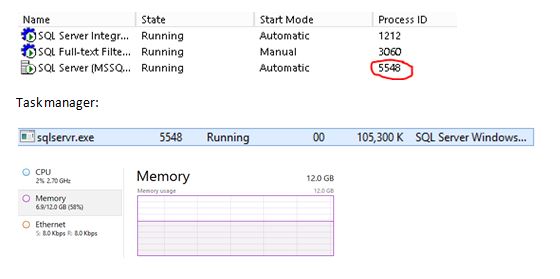

Let’s look at my environment: SQL Server is running under process ID 5548.

Right, so I am using 58% of my server memory, and Task Manager says SQL Server is consuming approx. 105 MB? Well, it’s not. That figure doesn’t make any sense.

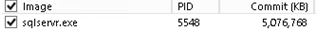

Use Resource Monitor to really know what is going on.

Resource Monitor shows:

The reason Task Manager is so inaccurate (in my case) is Lock Pages in Memory. With that setting enabled, SQL Server allocates memory through the AllocateUserPhysicalPages() API, and Task Manager does not track memory allocated that way.
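Another way to cross-check the Task Manager figure is to ask SQL Server itself. This is a minimal sketch, assuming you have VIEW SERVER STATE permission on the instance; it is not the method from the training event, just a query against the `sys.dm_os_process_memory` DMV.

```sql
-- Memory used by the SQL Server process, as reported by SQL Server itself.
SELECT physical_memory_in_use_kb / 1024  AS physical_memory_in_use_mb,
       locked_page_allocations_kb / 1024 AS locked_page_allocations_mb,
       large_page_allocations_kb / 1024  AS large_page_allocations_mb,
       memory_utilization_percentage
FROM sys.dm_os_process_memory;
```

A non-zero `locked_page_allocations_mb` suggests Lock Pages in Memory is in effect, and that locked memory is the part Task Manager leaves out of its figure.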