Data engineers, pipeline developers and general data enthusiasts will spend most of their time within a notebook. Here you develop your code, and you can add nice visualisations and commentary boxes too. It is a very rich web-based interface and is best experienced with Google Chrome (in my opinion).

You can do so much with notebooks, from widgets to visualisations and dashboards. Here let’s create a simple one, attach it to the cluster and run some commands.

Typically, a notebook lives in a workspace. A workspace is the per-environment home for accessing all of your Databricks assets, which include your notebooks and other things like experiments. Within my workspace I access my notebook, which has some elementary code. Before running it, you need to start the cluster and attach the notebook to it.

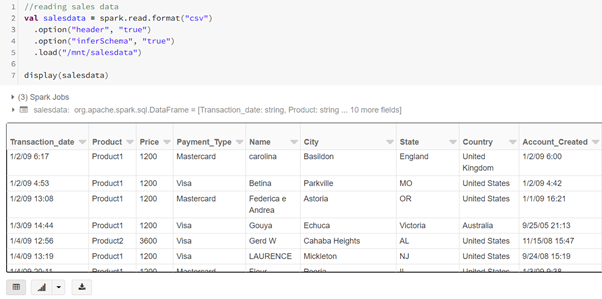

My language of choice is Scala.

In the first cell I check to see the built-in datasets.
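
A cell like this might look as follows. This is a minimal sketch rather than the exact code from my notebook, and it assumes the sample datasets sit under `/databricks-datasets`, which is where Databricks normally exposes them. `dbutils` and `display` are predefined in a Databricks notebook, so this only runs there.

```scala
// Runs inside a Databricks notebook cell attached to a running cluster.
// Lists the sample datasets that ship with the workspace
// (assumed location: /databricks-datasets).
val builtIn = dbutils.fs.ls("/databricks-datasets")

// Render the listing (path, name, size) as a table under the cell.
display(builtIn)
```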

In the next cell I read a basic CSV file. (Naturally, I needed to mount the storage beforehand, using a shared access signature for Azure Data Lake.)
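
For illustration, the mount and the read could be sketched like this. Everything in angle brackets is a placeholder, and the mount point `/mnt/demo` and the secret scope are hypothetical names, not the ones I used. The sketch also assumes the storage is reached through its blob endpoint with the `wasbs` driver, which is the usual way to mount with a SAS token; your setup may differ.

```scala
// Runs inside a Databricks notebook cell; dbutils, spark and display are predefined there.
// All names below are placeholders or hypothetical.
val container  = "<container-name>"
val account    = "<storage-account-name>"
val mountPoint = "/mnt/demo"

// Read the SAS token from a secret scope rather than pasting it into the notebook.
val sasToken = dbutils.secrets.get(scope = "<scope-name>", key = "<key-name>")

// Mount only once; mounting an already mounted path raises an error.
if (!dbutils.fs.mounts().exists(_.mountPoint == mountPoint)) {
  dbutils.fs.mount(
    source = s"wasbs://$container@$account.blob.core.windows.net",
    mountPoint = mountPoint,
    extraConfigs = Map(
      s"fs.azure.sas.$container.$account.blob.core.windows.net" -> sasToken
    )
  )
}

// Read the CSV from the mounted path, treating the first row as a header
// and letting Spark infer the column types.
val df = spark.read
  .option("header", "true")
  .option("inferSchema", "true")
  .csv(s"$mountPoint/<path-to-file>.csv")

display(df)
```

Schema inference costs an extra pass over the file, which is fine for a basic CSV like this one; for larger files you would probably declare the schema yourself.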

It is a very mature web UI experience.