Every major model out there can summarise documents, write code and answer multi-step questions. If you choose a vendor based on cost, great, but I don’t.

Doom and gloom?

I have read books such as Life 3.0 and If Anyone Builds It, Everyone Dies, and yes, most of it is doom and gloom: much of the discussion centres on warnings about alignment failure and the lack of governance and guardrails. Some will read these books and dismiss them as sci-fi, but once I had read them, the warnings were too hard to dismiss. So I got thinking: which company out there is treating AI safety as core to what it does? That led me to Anthropic and its Constitution.

The Constitution that changed my thinking

The Constitution covers honesty, avoiding harm, being helpful and being transparent about uncertainty. Claude will tell you when it’s unsure, and it will refuse to do things that could cause harm, not because someone developed a filter but because it’s a core principle of the model itself. I haven’t done the Constitution justice here, so you should read it for yourself at https://www.anthropic.com/constitution but you will probably see why I am so curious about exploring Claude further.

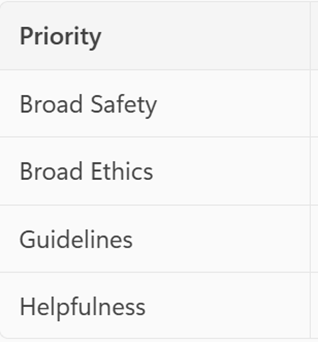

However, if there is one important concept to take from today, it’s the priority order of the Constitution below:

Safety first, then ethics, then Anthropic’s rules, then user helpfulness.

Claude is exactly the model I want to use.

Thank god, a post that is less than 1000 words.

I hope this will receive more traction.

I also hope that the authors of Anthropic’s constitution will understand that it’s time to cut the constitution down to 20 Palantir-style bullet points.

…the only way to compete with Palantir.

Otherwise we are doomed….


Thanks. That was the key: trying to keep it short and get to the point. The Constitution is so big I got lost in the ethics section. Where can I find info about this 20-bullet style from Palantir? I only know about their AI on RAILs.


Here: https://x.com/PalantirTech/status/2045574398573453312

I like the style, not the content.
