Over the past 6 months I have been pushing myself to use extended events (XEvents) instead of SQL Trace. Once you get past the learning curve it is probably the way to go, and if you are operating in the Azure space you have no choice: extended events are what you need to use if you want to collect information against SQL Database. There are some subtle differences in how you write the T-SQL between SQL Database (Azure) and your locally installed SQL Servers.

Category Archives: Azure

Clearing Plan Cache in SQL Database (Azure)

Sometimes when I am testing I like to clear the plan cache within my SQL database (Azure). Nowadays a lot of my test databases are Azure based, so how I clear the cache is different to how I would do it with an older “earthed” SQL Server.

Looking at my locally installed SQL Server.

SELECT @@VERSION

Getting some basic information about the cache for a specific database.

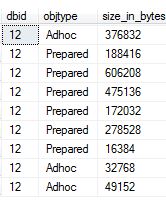

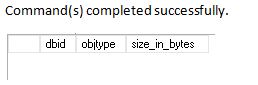

SELECT st.dbid, cp.objtype, cp.size_in_bytes FROM sys.dm_exec_cached_plans AS cp CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) st WHERE dbid = 12 OPTION (RECOMPILE);

Normally I would run:

-- Database_ID
DBCC FLUSHPROCINDB (12);

I have cleared it out.

So, let’s go to the Azure world.

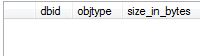

SELECT @@VERSION

Again, get some basic information for my database ID.

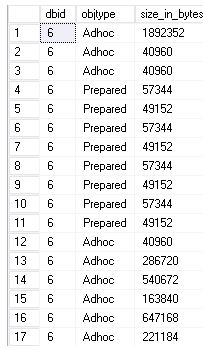

SELECT st.dbid, cp.objtype, cp.size_in_bytes FROM sys.dm_exec_cached_plans AS cp CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) st WHERE dbid = 6 OPTION (RECOMPILE);

Then I try the same command as before:

-- Database_ID
DBCC FLUSHPROCINDB (6);

It just does not happen:

Msg 15247, Level 16, State 14, Line 9
User does not have permission to perform this action.

I am not sure what permission I need, as I am logging in as a server admin.

So I used the following instead.

ALTER DATABASE SCOPED CONFIGURATION CLEAR PROCEDURE_CACHE;

Locking Resources in Azure

I look at locking resources within Azure as a safety mechanism that protects users from accidental deletion. Things can get quite confusing within the portal if, for example, you have close to 100 databases.

There are 2 types of resource lock in Azure.

- Delete – Authorised users can still read and modify a resource, but they cannot delete it.

- ReadOnly – Authorised users can read a resource but they cannot edit or delete it.

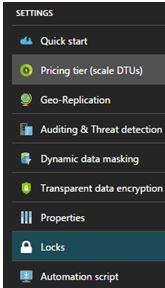

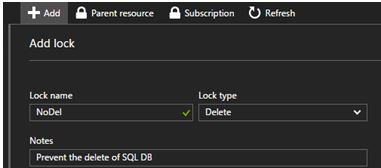

For this blog post I created a delete lock on one of my SQL databases. Under the settings of your SQL database you will see the LOCKS option.

You will then want to click ADD.

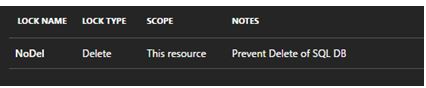

That’s it, so let’s put it to the test.

Message from the portal:

The lock named NoDel was unable to be added or edited for the following reasons: {“jqXHR”:{“readyState”:4,”responseText”:”{\”error\”:{\”code\”:\”AuthorizationFailed\”,\”message\”:\”The client does not have authorization to perform action ‘Microsoft.Authorization/locks/write’ over scope

Well, that is not what I want! To add a lock you must be an Owner or User Access Administrator.

Once you have addressed the permission issues just click OK.

You know how the saying goes – An ounce of prevention is worth a pound of cure.

I use Elastic database pools – for now

When you have many SQL databases running your environments, they show specific usage patterns, and some of them require different performance levels, how can you ensure that all the databases get the performance resources they need within a specified budget?

Elastic database pools could be the answer, because databases in an elastic pool consume resources from the pool but cannot exceed its limits, so both your cost and performance levels remain predictable.

As shown by the screenshot below there are three tiers to select from: Basic, Standard and Premium. Each tier imposes limits on the pool and the individual databases within the pool.

Terminology

This is probably the right time to discuss the meaning behind some key terms.

- DTU stands for Database Transaction Unit. As quoted from https://azure.microsoft.com/en-gb/documentation/articles/sql-database-service-tiers/#understanding-dtus this is “a unit of measure in SQL Database that represents the relative power of databases based on a real-world measure: the database transaction”. The higher the DTU, the higher the throughput capability.

- eDTU stands for Elastic Database Transaction Unit. It is a similar concept to the above but applies to elastic database pools only. Looking at the above diagram you may notice this unit is used at the pool level (eDTUs / pool) and the database level (min/max eDTUs).

- eDTU MIN (database level setting). The minimum number of eDTUs from the pool that a database in the pool is guaranteed at any time.

- eDTU MAX (database level setting). The maximum number of eDTUs that any single database in the pool may use – this is NOT a resource guarantee but a ceiling limit.

- POOL eDTU. The maximum number of eDTUs available to, and shared by, all databases within the pool.

My Setup

Elastic pools work for me because of the way I am using them: I use them to build environments. For example, I need to build 11 environments, each needing just 1 SQL database, so I need 11 databases. I could go for 11 single databases or use 1 pool with 11 databases within it.

This is the cost for standard single databases.

Cost for Standard elastic pool.

Based on the environment requirements and the amount of work and testing going through them, I decided that 2 key environments will need the S2 performance level and the other 9 will be S0.

The databases are not going to be big; we are talking about 6.5GB per database, which means 71.5GB of disk space in total.

With these facts in mind let’s crunch some numbers.

Cost comparison

Based on https://azure.microsoft.com/en-gb/pricing/details/sql-database/ and using GBP this is the cost comparison of the elastic pool versus purchasing individual SQL databases.

Standard 100 elastic pool = £136/month = £1,632/year

Single database cost for 9 S0 databases and 2 S2 databases = (9 x £9.1811) + (2 x £45.81) = £82.63 + £91.62 = £174.25/month = £2,091/year

Potentially, the more databases you can add to a pool the greater your savings (assuming that you are still within your selected pool’s limits), but you will need to work out for yourself whether or not elastic pools make financial sense for you.
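The comparison is easy to re-run if you want to try different mixes of databases. This is only a rough sketch in Python: the prices are the GBP figures quoted in this post (a snapshot that will have changed), and the names `monthly_single_cost`, `SINGLE_S0` and so on are made up for the illustration.

```python
# Monthly prices in GBP, copied from the figures quoted in this post.
# They are a point-in-time snapshot, not current Azure pricing.
POOL_STANDARD_100 = 136.00
SINGLE_S0 = 9.1811
SINGLE_S2 = 45.81


def monthly_single_cost(s0_count, s2_count):
    """Total monthly cost of buying every database individually."""
    return s0_count * SINGLE_S0 + s2_count * SINGLE_S2


singles = monthly_single_cost(s0_count=9, s2_count=2)
saving = singles - POOL_STANDARD_100

print(f"Single databases:  £{singles:.2f}/month, £{singles * 12:.0f}/year")
print(f"Standard 100 pool: £{POOL_STANDARD_100:.2f}/month, £{POOL_STANDARD_100 * 12:.0f}/year")
print(f"Pool saving:       £{saving:.2f}/month")
```

For my mix of 9 S0 and 2 S2 databases the pool comes out roughly £38 a month cheaper; change the counts to see where the break-even point sits for you.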

Database utilisation patterns

The key to using elastic database pools is that you must understand the characteristics of the databases involved and their utilisation patterns. If you do not, an elastic database pool may cause you problems.

My pool has a maximum of 100 eDTUs. I know for a fact that the two S2 databases will not be used at the same time, and that at most 3 of the S0 databases might be used at the same time. In other words, I know that when the databases peak concurrently they will not go beyond the 100 eDTU limit.

If, for example, one of my S2 databases and 8 S0 databases peaked at the same time then I would go well beyond my limits, but I know this won’t happen.
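To put numbers on that reasoning, here is a small Python sketch. It assumes a database sized as S0 or S2 peaks at roughly its single-database DTU figure (10 and 50 DTUs respectively) and that DTUs and eDTUs can be compared one for one. That is my simplification for planning purposes, not something Azure guarantees, and `peak_demand` is just an illustrative helper.

```python
# DTUs for the Standard performance levels used here (assumed peak demand).
DTU = {"S0": 10, "S2": 50}
POOL_EDTU = 100  # Standard 100 pool


def peak_demand(concurrent):
    """Sum the DTUs of the databases assumed to peak at the same time."""
    return sum(DTU[level] * count for level, count in concurrent.items())


expected = peak_demand({"S2": 1, "S0": 3})  # the worst case I actually expect
unlikely = peak_demand({"S2": 1, "S0": 8})  # the scenario I have ruled out

for name, demand in (("expected", expected), ("unlikely", unlikely)):
    verdict = "fits" if demand <= POOL_EDTU else "exceeds the pool"
    print(f"{name}: {demand} eDTUs, {verdict}")
```

The expected case needs 80 eDTUs and fits; the unlikely case needs 130 and does not.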

Another important consideration is the maximum storage allowed for a Standard 100 pool. This is currently set to 100GB and my solution is well within that limit. If the databases do grow then I would need to upgrade to a Standard 200 pool, which will cost me £272/month. So at the moment it makes financial sense for me.
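The storage headroom can be checked the same way. Again this is a sketch using only the figures from this post (11 databases of about 6.5GB each against the 100GB limit); the variable names are illustrative.

```python
DATABASES = 11
SIZE_GB = 6.5           # approximate size of each database today
POOL_STORAGE_GB = 100   # Standard 100 pool limit quoted in this post

used_gb = DATABASES * SIZE_GB
headroom_gb = POOL_STORAGE_GB - used_gb
# Average size each database could reach before the pool limit is hit.
max_avg_size_gb = POOL_STORAGE_GB / DATABASES

print(f"Used: {used_gb}GB, headroom: {headroom_gb}GB")
print(f"Each database can average up to {max_avg_size_gb:.2f}GB")
```

So each database can grow from 6.5GB to an average of about 9GB before I would have to think about the bigger pool.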

Copying your SQL Database

If you have created a SQL Database in Azure (PaaS) and need to make a copy of it on the same server, here is a quick method.

While connected to your master database execute the following, which creates SAP2 based on SAP.

CREATE DATABASE SAP2 AS COPY OF SAP

To track the progress you can use the following query.

SELECT * FROM sys.dm_database_copies WHERE partner_database = 'SAP2'

A replication state of 0 means pending: the copy is being prepared. A replication state of 1 means seeding: the SAP2 database has not yet completely synchronised with the original database.

Once this has completed you can check sys.databases to confirm that the database has successfully been created.

SELECT * FROM sys.databases WHERE name = 'SAP2'

If you really want you can query sys.dm_operation_status to see the operations that have been performed on a database. I would expect to see some sort of operation around copying on my original database.

SELECT * FROM sys.dm_operation_status WHERE major_resource_id = 'SAP' ORDER BY start_time DESC;

If you are interested in other Azure based SQL queries check out an older post of mine: https://blobeater.wordpress.com/2016/10/18/azure-sql-database-dmvs/