I like row versioning– see this link for more details: https://technet.microsoft.com/en-us/library/ms189122(v=sql.105).aspx

If your database is enabled for one of the isolation levels that uses row versioning and a row is updated it will have 14 bytes added to it.

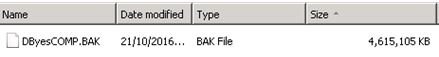

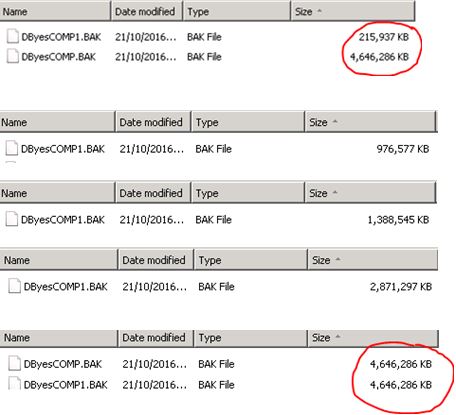

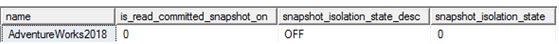

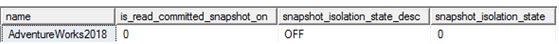

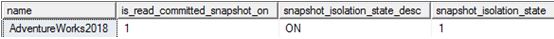

Before anything is enabled:

SELECT name,is_read_committed_snapshot_on, snapshot_isolation_state_desc,

snapshot_isolation_state

FROM sys.databases WHERE database_id = 18

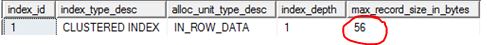

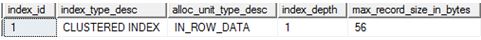

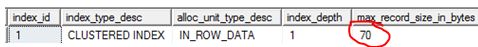

Let’s check a table before enabling anything.

select

index_id, index_type_desc,alloc_unit_type_desc,index_depth,max_record_size_in_bytes

from sys.dm_db_index_physical_stats (db_id('AdventureWorks2018'),

object_id('AWBuildVersion'), null, null, 'DETAILED')

Switch it on:

ALTER DATABASE [AdventureWorks2018]

SET READ_COMMITTED_SNAPSHOT ON WITH ROLLBACK IMMEDIATE;

GO

ALTER DATABASE [AdventureWorks2018]

SET ALLOW_SNAPSHOT_ISOLATION ON

GO

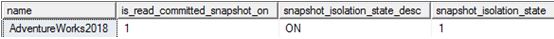

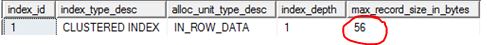

SELECT name,is_read_committed_snapshot_on, snapshot_isolation_state_desc,

snapshot_isolation_state

FROM sys.databases WHERE database_id = 18

Let’s do some work (UPDATE STATEMENT)

UPDATE [dbo].[AWBuildVersion]

SET ModifiedDate = GETDATE()

GO

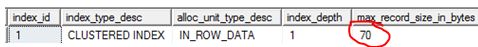

select

index_id, index_type_desc,alloc_unit_type_desc,index_depth,max_record_size_in_bytes

from sys.dm_db_index_physical_stats (db_id('AdventureWorks2018'),

object_id('AWBuildVersion'), null, null, 'DETAILED')

56 +14 = 70, meaning we have a 14 byte overhead. I did some research on this and I ended up going to my book self and dug out the SQL Server 2012 Internals text book, the 14 byte overhead is needed for “the actual pointer to the file, page, and row in tempdb which needs 8 bytes and 6 bytes are needed to store the XSN” (Chapter 13. Page 823)

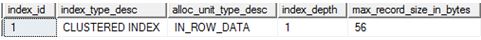

What happens if I disable row versioning and run an update?

ALTER DATABASE [AdventureWorks2018]

SET READ_COMMITTED_SNAPSHOT OFF

GO

ALTER DATABASE [AdventureWorks2018]

SET ALLOW_SNAPSHOT_ISOLATION OFF

GO

UPDATE [dbo].[AWBuildVersion]

SET ModifiedDate = '2009-10-13 00:00:00.000'

GO

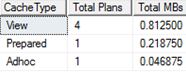

select

index_id, index_type_desc,alloc_unit_type_desc,index_depth,max_record_size_in_bytes

from sys.dm_db_index_physical_stats (db_id('AdventureWorks2018'),

object_id('AWBuildVersion'), null, null, 'DETAILED')

There is no longer the 14 byte overhead – due to the fact that row versioning is no longer on.