When you want to change configuration settings for your SQL Server you would either do it via Management studio (under server properties) or via TSQL calling on sp_configure.

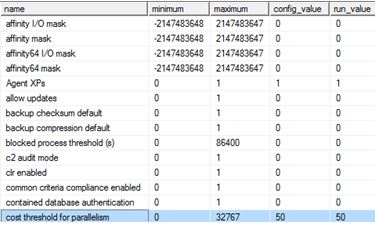

For example, I usually change the cost threshold for parallelism from the low value of 5 which is what I will show you now on my SQL Server, plus with a side-effect.

The side-effect is that your plan cache will get flushed, yes it really does.

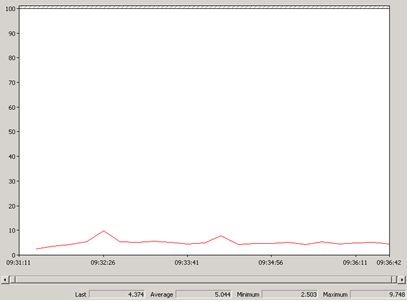

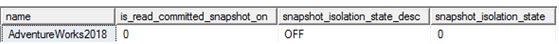

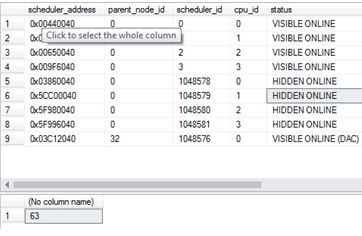

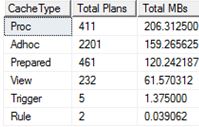

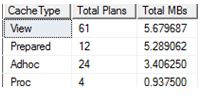

Let’s check cache before changing the setting (adapted from Kimberly Tripp http://www.sqlskills.com/blogs/kimberly/plan-cache-and-optimizing-for-adhoc-workloads/)

SELECT objtype AS [CacheType], COUNT_BIG(*) AS [Total Plans], SUM(CAST(size_in_bytes AS DECIMAL(18, 2))) / 1024 / 1024 AS [Total MBs] FROM sys.dm_exec_cached_plans GROUP BY objtype ORDER BY [Total MBs] DESC GO

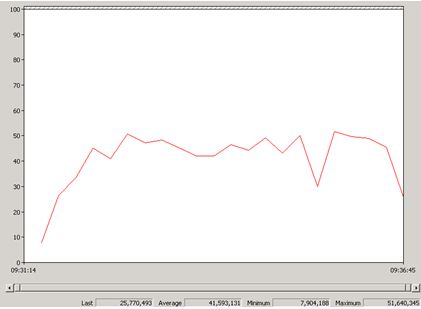

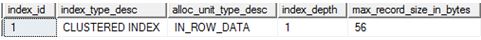

Let’s now change the setting and re-check cache.

EXEC sp_configure 'cost threshold for parallelism', '50' RECONFIGURE;

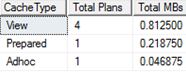

SELECT objtype AS [CacheType], COUNT_BIG(*) AS [Total Plans], SUM(CAST(size_in_bytes AS DECIMAL(18, 2))) / 1024 / 1024 AS [Total MBs] FROM sys.dm_exec_cached_plans GROUP BY objtype ORDER BY [Total MBs] DESC GO

Yes, it has flushed. I couldn’t find anything on books on line about this behaviour. However it was mentioned in the following whitepaper: http://msdn.microsoft.com/en-us/library/dn148262.aspx under the flush entire plan cache section page 22 where RECONFIGURE command is mentioned. I am not sure if this applies to all options or a subset of them, more testing is required.

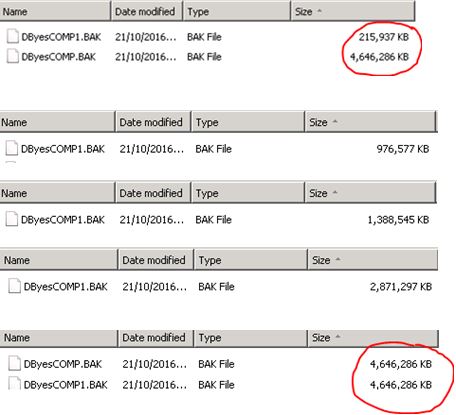

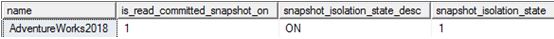

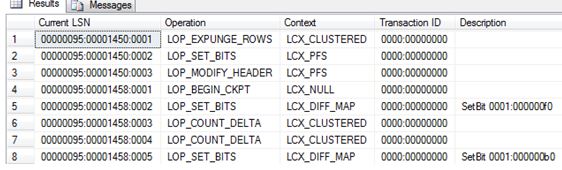

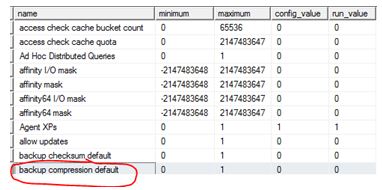

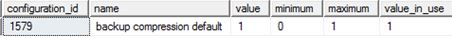

I was intrigued so I did one more test – enabling backup compression which is currently off.

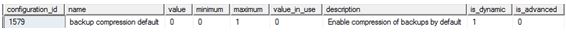

Or you can check it this way:

SELECT * FROM sys.configurations WHERE name = 'backup compression default' ; GO

On the same test server my cache now looks like:

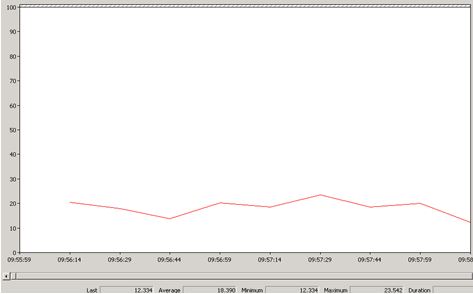

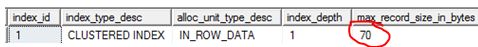

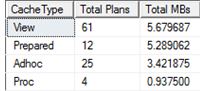

Let’s enable backup compression and re-check.

EXEC sp_configure 'backup compression default', 1 ; RECONFIGURE GO

Well, no effect this time.

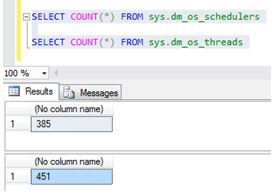

SELECT objtype AS [CacheType],

COUNT_BIG(*) AS [Total Plans],

SUM(CAST(size_in_bytes AS DECIMAL(18, 2))) / 1024 / 1024 AS [Total MBs]

FROM sys.dm_exec_cached_plans

GROUP BY objtype

ORDER BY [Total MBs] DESC

GO

This is something that I would personally talk about to a group of people and is my entry to https://sqlbek.wordpress.com/2016/10/25/t-sql-tuesday-84-growing-new-speakers/ – yes it would be demo heavy.