You have used Claude. But which Claude?

The Claude app (claude.ai, the desktop and mobile apps) is the chat product you talk to. The Claude API is the developer platform other products are built on. Same intelligence underneath, completely different audiences and ambitions. Here is what each one is for.

Claude “The App”

This is the chat product at claude.ai, in the desktop app and in the mobile app. It is probably the most commonly used and best understood of the Claude products. The screenshot below shows the Windows desktop app.

It is used by all kinds of people: knowledge workers, writers, analysts, students, founders. If you can type a question, you can use it.

The aim: open it, ask, get work done. Everything is bundled in: chat, web search, file creation, connectors to Google Drive, Gmail and Slack, artifacts for code and documents, skills for domain expertise, projects for keeping areas of work separate, and memory across chats. You pick a plan (Free, Pro, or Max at 5x or 20x usage) and Anthropic runs the infrastructure. The paid plans are billed as a monthly subscription.

Claude API (the developer platform)

This is raw access to the models, plus the same building blocks the app uses, exposed for you to compose into your own product. It is for developers, engineers and product teams: people building AI into their own software with agents and workflows. You write the code, manage tokens, hooks and MCP, and design the UX.

Key takeaway: if you want to build something with Claude, you want the API.
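To make "you write the code" concrete, here is a minimal sketch of a single call to the Messages endpoint using only the Python standard library. It assumes your key is stored in an environment variable named ANTHROPIC_API_KEY, and the model ID is a placeholder you would swap for a current one from the documentation. The helper names build_request and ask are made up for this post. Most people would use the official SDK instead, so treat this as an illustration of what happens underneath.

```python
import json
import os
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
MODEL = "your-model-id"  # placeholder: replace with a current model ID from the docs


def build_request(prompt, api_key, model=MODEL, max_tokens=256):
    """Build (but do not send) an HTTP request for one user message."""
    body = {
        "model": model,
        "max_tokens": max_tokens,  # upper bound on the length of the reply
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


def ask(prompt):
    """Send the prompt and return the text of the first content block."""
    api_key = os.environ["ANTHROPIC_API_KEY"]  # assumed variable name
    with urllib.request.urlopen(build_request(prompt, api_key)) as resp:
        reply = json.loads(resp.read())
    return reply["content"][0]["text"]

# Example (needs a real key and model ID):
#   print(ask("Summarise the difference between an app and an API."))
```

Notice what is missing compared with the app: no chat history, no file handling, no interface. Those are the parts you build yourself.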

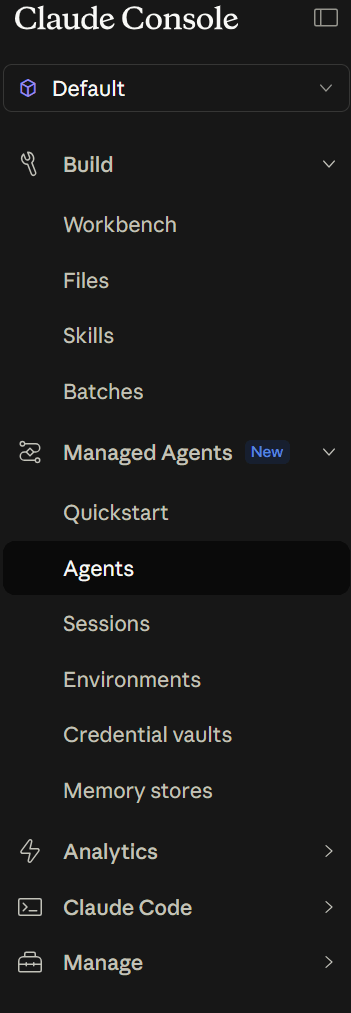

What about Claude Console?

The Console is the central place for administration: generating API keys, monitoring usage and costs, setting spending limits, and testing prompts in the Workbench before wiring them into your code. The API is what your application calls. The Console is how you manage it. You will probably need both.
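The Console gives you the authoritative numbers, but you can also keep a rough running tally inside your own application. The sketch below assumes each API response carries a usage object with input_tokens and output_tokens, and that you supply the prices per million tokens yourself. The UsageTracker class and the prices in the example are hypothetical, not real rates.

```python
from dataclasses import dataclass


@dataclass
class UsageTracker:
    """Rough client-side cost tally; the Console remains the source of truth."""
    input_price_per_mtok: float   # USD per million input tokens (you supply this)
    output_price_per_mtok: float  # USD per million output tokens (you supply this)
    budget_usd: float             # your own soft limit
    spent_usd: float = 0.0

    def record(self, usage: dict) -> float:
        """Add one response's usage to the tally and return its estimated cost."""
        cost = (
            usage["input_tokens"] * self.input_price_per_mtok
            + usage["output_tokens"] * self.output_price_per_mtok
        ) / 1_000_000
        self.spent_usd += cost
        return cost

    def over_budget(self) -> bool:
        return self.spent_usd > self.budget_usd


# Example with made-up prices: 1 USD and 2 USD per million tokens, 0.01 USD budget.
tracker = UsageTracker(1.0, 2.0, budget_usd=0.01)
tracker.record({"input_tokens": 1000, "output_tokens": 500})
```

A tally like this is only an early warning. The spending limits you set in the Console are what actually cap the bill.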

Claude Code?

Claude Code is the CLI for developers. It is built on the API, but you talk to it like the app: it edits files, runs tests and executes shell commands, all from your terminal. If you write software, this is very useful for you too. It works great in the terminal, and also alongside Visual Studio Code.

Summary

The app is for people who want answers. The API, the Console and Claude Code are for people who want to build. Pick the one that matches what you are trying to do. Hopefully this helps you understand what is out there (all of it subject to fast change!).