Every now and again I navigate to Microsoft’s certification page to see what changes, if any, have taken place in the Data Platform space. (Check out the link – http://www.microsoft.com/en-us/learning/certification-overview.aspx)

Nothing has changed in terms of the “pyramid” grade structure, you know, going from MTA to MCSA to MCSE, which I will come back to later.

However, there is one change I have noticed: the MCSE: Data Management & Analytics (they still have the MCSE: Data Platform and BI certifications). For this certificate you need to know about Designing and Implementing Cloud Data Platform Solutions and Designing and Implementing Big Data Analytics Solutions.

It makes sense. Cloud, data analysis and data mining are becoming more important as data grows, and businesses can leverage these techniques to gain an advantage, so it’s good to see Microsoft adapt to this and change up its certifications.

HOWEVER, I am still slightly disappointed that there is no tip-of-the-pyramid certificate.

A bit of background: I used to hold all three MCITP certificates and the MCSE: Data Platform certificate (I failed a few exams along the way, but I got there in the end). Logically, I would then look to the next step; I just feel that there should be a certificate that goes beyond the level of questioning in the MCSE exams.

I have this one:

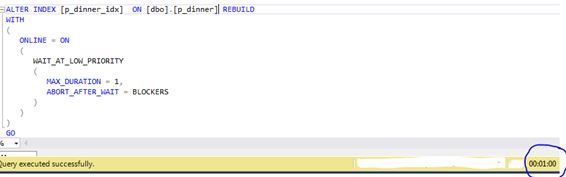

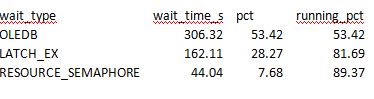

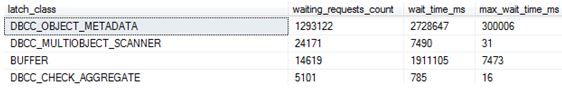

When I attended SQLskills training, on the last day we were given problems to troubleshoot and fix. They were tough, they made me think hard, and we had to “apply” what we had learned over the previous 10 days or so. These are the sorts of questions I want to evaluate myself against.

I won’t be renewing my MCSE certificate, and not because of a lack of motivation to learn; if anything, it’s quite the opposite. I am hungrier than ever to pick up and improve my Azure (and SQL) skills, get my hands dirty, and then hopefully share what I learn on my blog.

I will say this: if you are new to this technology, I think certification is a great way to get started and learn to a set plan, so I would encourage you to start at the MCSA, just like I did 10 years ago (it was MCTS back then).